Artificial Intelligence (AI) is reshaping workplace safety in the UK. This guide explores how AI can enhance health and safety, while addressing the ethical considerations essential for responsible use. Health and safety professionals increasingly need to understand how UK regulations and best practice apply to AI in the workplace.

AI’s potential spans predictive analytics to automated monitoring, offering powerful tools to reduce risks and prevent accidents. Yet, leveraging this technology demands a clear strategy, adherence to legal frameworks, and ethical deployment. This article outlines practical steps for integrating AI into safety management systems effectively and responsibly.

Understanding AI’s Potential in UK Workplace Safety

AI brings innovative solutions that can transform traditional health and safety practices. Predictive analytics stands out as a key benefit. By analysing large datasets, AI identifies patterns and predicts hazards before they occur, shifting safety management from reactive to proactive. For instance, AI can monitor equipment performance to predict maintenance needs, preventing machinery failures and accidents.
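The equipment-monitoring idea above can be illustrated with a deliberately simple sketch. It flags sensor readings that deviate sharply from the recent baseline using a rolling z-score, which is a basic statistical stand-in rather than a trained predictive model. The function name, window size, threshold, and vibration figures are all assumptions made for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate sharply from the preceding window."""
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        # A perfectly flat baseline has no spread, so skip it to avoid dividing by zero.
        if sigma == 0:
            continue
        if abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical vibration readings (mm/s) from a single machine.
vibration = [2.1, 2.0, 2.2, 2.1, 2.0, 2.1, 2.2, 4.8, 2.1, 2.0]
print(flag_anomalies(vibration))  # prints [7]
```

In practice a flagged reading would prompt an inspection rather than an automatic conclusion, and thresholds would need tuning against each machine's normal operating range.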

Enhanced risk assessment is another advantage. AI processes complex information like incident reports, near-miss data, environmental conditions, and worker behaviour to provide a detailed risk picture. This helps organisations allocate resources more effectively and implement targeted preventative measures. Additionally, AI-powered systems can monitor compliance in real time, flagging deviations from safety protocols or unsafe practices such as incorrect PPE use. Together, these capabilities can make risk management more targeted and timely.
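As a rough illustration of turning incident and near-miss data into a prioritised risk picture, the sketch below ranks work areas by a weighted event rate per 100,000 hours worked. It is plain arithmetic, not an AI model, and the weighting, field names, and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AreaRecord:
    name: str
    incidents: int        # recorded injuries in the period
    near_misses: int      # reported near misses in the period
    hours_worked: float   # exposure in the same period


def risk_rate(record, near_miss_weight=0.2):
    """Weighted events per 100,000 hours worked (illustrative weighting only)."""
    weighted_events = record.incidents + near_miss_weight * record.near_misses
    return weighted_events / record.hours_worked * 100_000


# Hypothetical figures for three work areas.
areas = [
    AreaRecord("Loading bay", incidents=3, near_misses=10, hours_worked=50_000),
    AreaRecord("Warehouse", incidents=1, near_misses=5, hours_worked=100_000),
    AreaRecord("Workshop", incidents=2, near_misses=20, hours_worked=40_000),
]

# Highest weighted rate first, so attention goes where exposure-adjusted risk is greatest.
ranked = sorted(areas, key=risk_rate, reverse=True)
print([area.name for area in ranked])
```

Normalising by hours worked matters here: an area with few incidents but little exposure can still rank above a busier area with more incidents.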

Navigating the UK Legal and Regulatory Framework for AI

The UK’s approach to regulating AI in the workplace is evolving, focusing on safe and responsible deployment. While no single AI-specific law exists, existing health and safety legislation provides a framework. The Health and Safety at Work etc. Act 1974 (HSWA) requires employers to ensure, so far as is reasonably practicable, the health, safety, and welfare of their employees, and this duty extends to the use of technology such as AI.

The Management of Health and Safety at Work Regulations 1999 (MHSWR) centre on risk assessment. Employers must carry out a suitable and sufficient assessment of risks and take action to control them. Introducing AI means assessing new risks such as data privacy, algorithmic bias, and system failure, as well as changes to existing risks. The Health and Safety Executive (HSE) provides guidance on AI use, stressing that new technologies must meet safety standards.

Specific regulations apply depending on the AI’s application. For example, the Provision and Use of Work Equipment Regulations 1998 (PUWER) require work equipment, including machinery, to be safe, suitable, and maintained. Data protection law, including the UK GDPR and the Data Protection Act 2018, is critical when AI processes personal data, especially where biometric data or employee monitoring is involved.

Ethical Considerations in AI Deployment for Safety

Ethical considerations are vital when deploying AI in workplace safety. Algorithmic bias is a major concern. If AI systems are trained on biased data, they may discriminate against certain groups, leading to unequal safety outcomes. For instance, facial recognition systems for PPE compliance might perform less accurately on individuals with certain skin tones.
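One way to check for the kind of bias described above is to compare a system's accuracy across groups on a labelled evaluation set. The sketch below assumes each evaluation record carries a group label, the system's prediction, and the ground truth. The group names and data are hypothetical, and accuracy is only one of several fairness measures an organisation might choose.

```python
from collections import defaultdict


def accuracy_by_group(results):
    """results: iterable of (group, predicted, actual) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in results:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(results):
    """Difference between the best- and worst-served groups."""
    scores = accuracy_by_group(results)
    return max(scores.values()) - min(scores.values())


# Hypothetical evaluation: did the system correctly judge PPE compliance?
evaluation = [
    ("group_a", True, True), ("group_a", False, False),
    ("group_a", True, True), ("group_a", True, True),
    ("group_b", True, True), ("group_b", False, True),
    ("group_b", True, False), ("group_b", False, False),
]

print(accuracy_by_group(evaluation))
print(accuracy_gap(evaluation))
```

A large gap between groups would be a reason to investigate the training data and camera conditions before relying on the system's outputs.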

Transparency and explainability are also essential. Workers and safety professionals need to understand how AI systems make decisions, particularly when these impact safety or employment. A “black box” approach, where the AI’s reasoning is unclear, can erode trust and hinder error correction. Organisations should aim for AI systems that provide clear, understandable explanations.

Data privacy and surveillance are further ethical dilemmas. While AI can monitor workplaces for safety hazards, it risks infringing on employee privacy and fostering distrust. Balancing safety monitoring with respect for privacy is crucial. Clear policies on data collection, storage, and use are necessary, along with a documented lawful basis for processing. Where consent is relied on, it must be freely given, which is difficult to demonstrate in an employment relationship.
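One technical measure that can support such policies is pseudonymising worker identifiers before monitoring events are stored for analysis. The sketch below uses a keyed hash (HMAC-SHA256) from Python's standard library. The key, field names, and event structure are hypothetical, and pseudonymised data is still personal data under the UK GDPR, so this reduces exposure rather than removing obligations.

```python
import hashlib
import hmac


def pseudonymise(worker_id: str, secret_key: bytes) -> str:
    """Replace a worker ID with a keyed hash so analysts do not see the raw ID."""
    digest = hmac.new(secret_key, worker_id.encode("utf-8"), hashlib.sha256)
    # Truncated for readability; keep the full digest if collisions are a concern.
    return digest.hexdigest()[:16]


# Hypothetical key: in practice it would be stored and rotated in a secrets manager.
key = b"replace-with-a-managed-secret"

# Hypothetical monitoring event.
event = {"worker_id": "E12345", "zone": "Bay 3", "event": "missing_hard_hat"}

# Copy the event, swapping the raw ID for its pseudonym.
safe_event = {**event, "worker_id": pseudonymise(event["worker_id"], key)}
print(safe_event)
```

Because the same ID always maps to the same pseudonym under a given key, trends can still be analysed, while re-identification requires access to the key.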

Accountability is another key principle. When an AI system’s decision leads to harm, responsibility must be clear – whether it lies with the developer, deployer, or operator. Establishing accountability is essential for ethical governance and learning from AI-related incidents.

How to Implement AI in Your Safety Management System

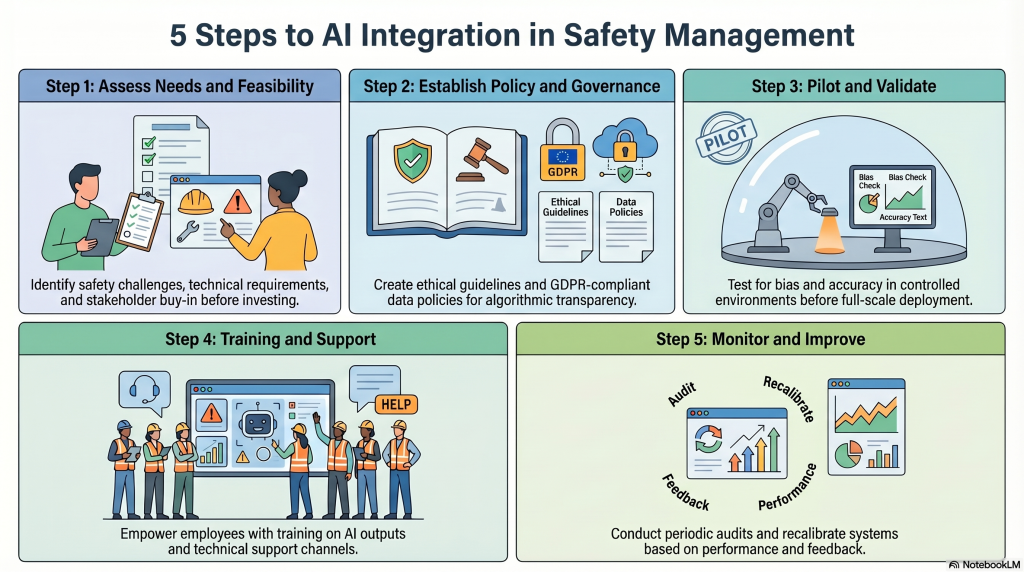

Step 1: Conduct a Comprehensive Needs Assessment and Feasibility Study

Before introducing AI, identify specific safety challenges it can address. Determine where traditional methods fall short or where AI can offer improvements. For example, are you experiencing high rates of slips, trips, and falls, or recurring equipment failures? Research AI technologies and assess their suitability. Consider technical feasibility, infrastructure requirements, and potential return on investment. Engage stakeholders, including safety officers, IT departments, and employees, to gather input and secure buy-in.

Step 2: Develop a Robust AI Safety Strategy and Policy

Create a formal strategy outlining your approach to AI in safety, including objectives, scope, and ethical principles. Develop clear data governance policies, ensuring compliance with UK GDPR. Establish guidelines for algorithmic transparency and explainability, enabling workers to understand AI decisions. Define accountability for AI system performance and incidents. Integrate this policy into your existing safety management system.

Step 3: Pilot, Test, and Validate AI Solutions

Before full deployment, pilot AI solutions in a controlled environment. Test the system’s accuracy, reliability, and effectiveness in identifying hazards and preventing incidents. Watch for biases in the AI’s algorithms and data. Validate performance against safety metrics and benchmarks. Document testing procedures, results, and adjustments. This iterative process ensures the AI is fit for purpose and safe for wider use.
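Validation during a pilot can be made concrete by comparing the system's hazard flags against observations labelled by safety staff. The sketch below computes precision (how many flags were real hazards) and recall (how many real hazards were flagged). The data is hypothetical, and suitable benchmarks will depend on the hazard and the consequences of a missed detection.

```python
def precision_recall(predictions, labels):
    """Compare AI hazard flags with ground-truth labels from safety staff."""
    pairs = list(zip(predictions, labels))
    true_pos = sum(1 for p, l in pairs if p and l)
    false_pos = sum(1 for p, l in pairs if p and not l)
    false_neg = sum(1 for p, l in pairs if l and not p)
    precision = true_pos / (true_pos + false_pos) if (true_pos + false_pos) else 0.0
    recall = true_pos / (true_pos + false_neg) if (true_pos + false_neg) else 0.0
    return precision, recall


# Hypothetical pilot results: True means "hazard present".
ai_flags = [True, True, False, True, False, False, True, False]
observed = [True, False, False, True, True, False, True, False]

precision, recall = precision_recall(ai_flags, observed)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

For safety applications, recall often deserves particular scrutiny, since a missed hazard is usually more serious than a false alarm.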

Step 4: Provide Comprehensive Training and User Support

Successful AI integration depends on user adoption. Train employees who will interact with or be affected by the AI system. Explain how it works, its benefits, limitations, and how to interpret its outputs. Educate staff on data privacy policies. Establish clear support channels for technical assistance and reporting errors. Empowering employees fosters effective use and a positive safety culture.

Step 5: Monitor, Evaluate, and Continuously Improve

AI deployment requires ongoing monitoring and evaluation. Regularly review the system’s performance against safety objectives. Collect user feedback to identify areas for improvement. Stay updated on AI developments and regulatory changes. Update or recalibrate AI systems as new data emerges or operational needs evolve. Conduct periodic audits to ensure compliance with legal and ethical standards. Continuous improvement keeps the AI system effective and aligned with organisational goals.
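Ongoing monitoring can start with something as simple as tracking a performance metric over time and flagging periods that fall below an agreed minimum. The sketch below assumes monthly recall figures are already being recorded. The threshold and values are hypothetical.

```python
def months_needing_review(monthly_recall, minimum=0.9):
    """Return the months in which recall fell below the agreed minimum."""
    return [month for month, recall in monthly_recall.items() if recall < minimum]


# Hypothetical monthly recall for a hazard-detection system.
monthly_recall = {
    "2024-01": 0.95,
    "2024-02": 0.93,
    "2024-03": 0.88,
    "2024-04": 0.91,
    "2024-05": 0.84,
}

print(months_needing_review(monthly_recall))
```

A flagged month would trigger a review of recent data and operating conditions, which may lead to recalibration or retraining.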

Practical Checklist for AI Implementation in Workplace Safety

- Define Clear Objectives: Articulate the safety challenges AI will address and expected outcomes. This ensures the AI solution is purposeful and aligns with safety goals.

- Conduct a Thorough Risk Assessment: Identify risks associated with the AI system, including data privacy, algorithmic bias, system failure, and cybersecurity threats. Integrate this into broader risk management.

- Ensure Data Quality and Relevance: Verify that training and operational data is accurate, relevant, unbiased, and compliant with data protection laws. Poor data leads to flawed AI outputs.

- Establish Transparency and Explainability: Ensure AI decision-making is understandable to human operators and stakeholders. This builds trust and enables effective oversight.

- Develop Clear Accountability Frameworks: Define responsibility for AI system performance, maintenance, and incidents. Clear accountability is essential for governance.

- Implement Robust Cybersecurity Measures: Protect AI systems and data from cyber threats, unauthorised access, and manipulation. Breaches can have serious safety implications.

- Provide Adequate Training and Support: Train employees on interacting with, interpreting, and troubleshooting the AI system. Establish ongoing support mechanisms.

- Conduct Pilot Testing and Validation: Pilot test AI in a controlled environment before full deployment to validate effectiveness and identify issues. This minimises the risks of introducing new technology.

- Monitor Performance and Review Regularly: Continuously monitor AI performance against safety metrics and conduct periodic reviews. Adapt the system as needed.

- Engage Employees and Stakeholders: Involve employees, safety representatives, and stakeholders throughout AI implementation. This fosters acceptance and addresses practical considerations.

Conclusion

AI integration offers transformative opportunities for UK workplace safety. By navigating legal frameworks, addressing ethical considerations, and following structured implementation steps, organisations can harness AI responsibly. Focusing on transparency, accountability, and continuous improvement ensures AI becomes a valuable tool, fostering safer and healthier working environments for all employees.

Frequently Asked Questions

What are the key benefits of AI in UK workplace safety?

AI offers several benefits, including predictive analytics to identify hazards before they occur, enhanced risk assessments by analysing complex datasets, and real-time monitoring of safety compliance. These tools help shift safety management from reactive to proactive approaches, reducing risks and preventing accidents effectively.

Does the UK have specific laws regulating AI in workplace safety?

Currently, there is no single AI-specific law in the UK. However, existing health and safety legislation, such as the Health and Safety at Work etc. Act 1974, applies to AI deployment. Employers must ensure AI systems are used responsibly and comply with these legal frameworks to maintain workplace safety.

How can AI improve risk assessments in the workplace?

AI enhances risk assessments by processing complex information, such as incident reports, near-miss data, environmental conditions, and worker behaviour. This provides a detailed risk picture, enabling organisations to allocate resources effectively and implement targeted preventative measures.

What ethical considerations should employers address when using AI for safety?

Employers must ensure AI is deployed ethically by maintaining transparency, avoiding bias in decision-making, and protecting worker privacy. Responsible use of AI involves clear strategies and adherence to ethical guidelines to balance technological benefits with employee trust and fairness.

Can AI help monitor compliance with safety protocols?

Yes, AI-powered systems can monitor compliance in real time by flagging deviations from safety protocols, such as incorrect PPE use or unsafe practices. This capability helps organisations address issues promptly and maintain a safer working environment.

Is predictive maintenance a viable application of AI in workplace safety?

Absolutely. AI can monitor equipment performance to predict maintenance needs, preventing machinery failures and accidents. This proactive approach reduces downtime and enhances overall workplace safety by addressing potential hazards before they escalate.